In 2012, NASA’s Curiosity Rover landed on Mars. Although not described this way, it is an Edge device to the extreme.

The Curiosity Rover’s home is, at its closest, about 33.9 million miles from Earth. But just like any other Edge device, such as your mobile phone or tablet, it has a locally embedded computer that can perform a variety of functions and actions to support its mission of exploring the planet. At the same time, this system can accept periodic software updates that increase its capabilities. This is the essence of what companies like GE are doing at the Edge to improve the performance, efficiency and capabilities of the billions of industrial machines and systems that run and manage life itself on our planet.

The distance might not be as great, but our ambitions for how much capability and value we can deliver to our machines with Edge Computing are much greater.

Edge Computing, sitting at the peak of the Gartner hype cycle, can be simply defined as the establishment and delivery of computing capabilities to the periphery of a networked system in order to improve the performance, operating cost and reliability of an application or service. Simple enough, but the true excitement around Edge Computing and the Industrial Internet lies in what it solves and makes possible, such as:

- Removing problems that have plagued embedded systems (e.g. cost, flexibility)

- Simplifying embedded development while allowing specialization to thrive

- Extending the reach and benefits from continuous integration & delivery (CI/CD)

- Creating future-proof industrial software that can receive secure over-the-air updates

- Delivering advanced analytics while meeting size, weight and power constraints

- Providing a unifying platform for delivering applications that deliver business outcomes

While the concept of Edge Computing has had time to mature and make it out to the product floor, the uses and strategies of these new offerings are still in their infancy with interesting areas for exploration and exploitation.

Where is this going?

There is a lot of ground to cover in the realm of Edge Computing; to synthesize the technology, I will lay out typical use cases and interesting, real results through a series of articles. At first, these articles will be instructive, “reading you in” to the effort at GE and other places. Then, over time, as you become immersed in all aspects of Edge Computing and start to see its fingerprint everywhere, we will shift to the strategy and business aspects of generating value. As we proceed, I look forward to the discussions and learnings this will spark with those who are new to the field and those who have been working in the area for some time.

With that, let’s get started, which requires a return trip from the edge of Mars.

Need to be ready to change

One of my favorite Edge examples to highlight is the one I mentioned at the beginning of this post, NASA’s Curiosity rover. Now one might expect that when it landed on Mars in 2012, it was a top-of-the-line NASA exploration vehicle packed with computing power. But the truth is that because of the severe environmental conditions and SWAP-C constraints (you’ll be hearing more about this later), the processor on board was something that passed for a computer back in 1995. So, after it landed on the surface of Mars, an over-the-air (OTA) software update was installed. That update provided a ‘brain transplant’, with the new software providing capabilities more suited to exploring the surface of the planet than to landing safely. The distance involved in this OTA update dwarfs that of a simple iPhone update between any two points on Earth.

A secure embedded computing platform operating at the edge of a network performing mission-critical functionality with the ability to be remotely updated … game changer.

GE may not yet be dealing with interplanetary distances; however, we have assets all over the globe. These assets include aircraft engines, combined-cycle plants, energy storage, and wind farms, and they need to operate reliably and safely over long periods of time. It isn’t economically or practically feasible for us to send a fleet of technicians out on a weekend (or any other time) to climb towers, with drills and USB sticks, to update software every time we would like to fix a defect or provide enhanced operational capability. This is one of the reasons the Industrial Internet is so important. There is no Industrial Internet without Edge Computing.

Laws that guide the Edge

If you have been following technology trends, you can’t help but feel the pull towards Cloud Computing. The cloud has all the answers, it is omnipresent, it doesn’t rest, it’s low cost, it’s easy, and it’s a wonderful floor polish/dessert topping to boot. So why do we need Edge Computing? Well, it turns out that for some applications there are laws of physics, economics and policy that can’t be violated. To understand why everything can’t be done in the cloud, we need to dig into these “laws” a little deeper.

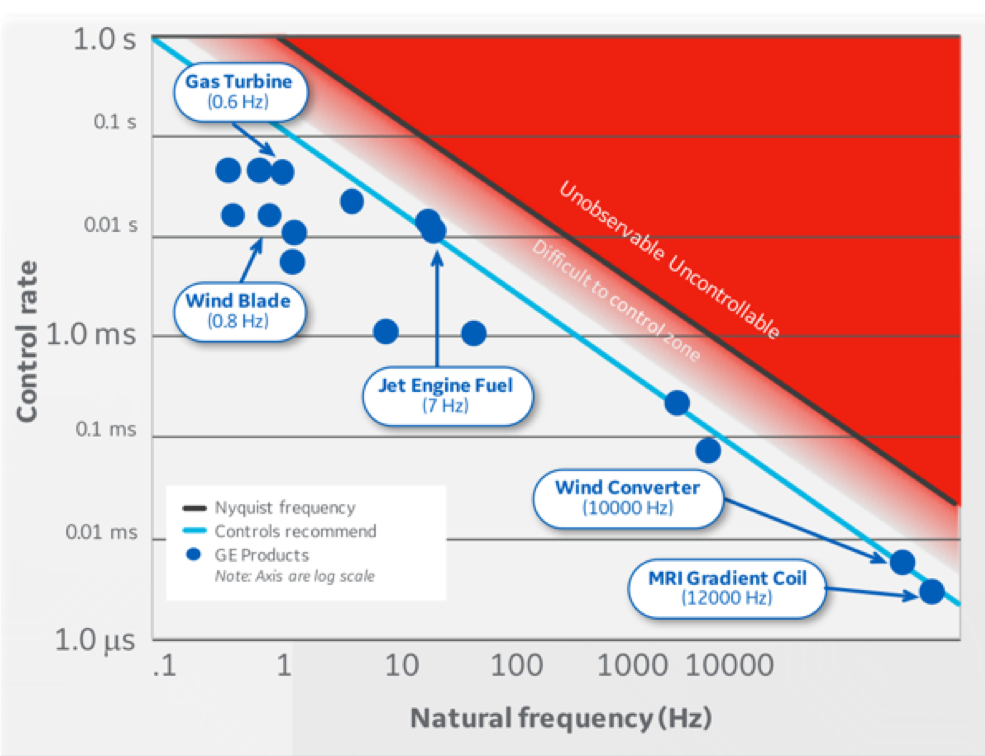

Figure 2. Physics Drives Computation to the Edge

Controlling an industrial machine has many challenges, including safety, security and maintaining deterministic behavior. The graph in figure 2 represents one criterion and a guideline for being able to control a system at the edge. The x-axis is the natural frequency, or how fast a system can move (slow things on the left, fast things on the right), and the y-axis shows how often you need to sample or measure a system to “See” it accurately.

The black line is the Nyquist sampling criterion, which says you have to measure a system at least twice per cycle. For example, if a system can go through a cycle in 1 second, you need to measure it every 0.5 seconds to be able to tell its frequency (this is the black line and the red area of the plot). The issue is that to control a system you need not only to tell its frequency but also to understand how it changes during the cycle. The guideline for controlling a system is that you need to sample about 20 times per cycle (this is the blue line on the plot). So for a relatively slow system that goes through 1 cycle per second, you need to sample every 1/20 of a second, or every 0.05 seconds!

As an example, a wind turbine with a blade that is 80 meters long (very big) has a natural frequency of about 1 cycle per second. To control that system and keep it stable, you would need to See (sample and measure the system), Think (compute what action to take) and Do (move an actuator or something in the system) every 0.05 seconds. You need to “See, Think, Do” always.
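The arithmetic behind the sampling guideline above can be sketched in a few lines of Python. This is only an illustration of the rule of thumb, not code from any GE product: the function names and the two loop latencies at the end (5 ms for a local controller, 100 ms for a cloud round trip) are assumptions chosen for the example.

```python
# Samples per cycle taken from the text: 2 to identify a frequency (Nyquist),
# about 20 to control the system.
NYQUIST_SAMPLES_PER_CYCLE = 2
CONTROL_SAMPLES_PER_CYCLE = 20


def sample_period_s(natural_frequency_hz: float, samples_per_cycle: int) -> float:
    """Longest allowed time between samples, in seconds."""
    if natural_frequency_hz <= 0 or samples_per_cycle <= 0:
        raise ValueError("frequency and samples per cycle must be positive")
    return 1.0 / (natural_frequency_hz * samples_per_cycle)


def fits_control_budget(natural_frequency_hz: float, loop_latency_s: float) -> bool:
    """True if one full See-Think-Do pass fits inside the control sample period."""
    budget = sample_period_s(natural_frequency_hz, CONTROL_SAMPLES_PER_CYCLE)
    return loop_latency_s <= budget


if __name__ == "__main__":
    turbine_hz = 1.0  # about 1 cycle per second, as in the wind turbine example

    nyquist = sample_period_s(turbine_hz, NYQUIST_SAMPLES_PER_CYCLE)
    control = sample_period_s(turbine_hz, CONTROL_SAMPLES_PER_CYCLE)
    print(f"Nyquist period: {nyquist:.2f} s, control period: {control:.2f} s")

    # Hypothetical loop latencies, for illustration only.
    print("local 5 ms loop fits:", fits_control_budget(turbine_hz, 0.005))
    print("cloud 100 ms round trip fits:", fits_control_budget(turbine_hz, 0.100))
```

Under these assumed latencies, the local loop fits inside the 0.05 second budget and the cloud round trip does not, which is the point the plot makes: as the natural frequency rises, the budget shrinks and the computation has to sit closer to the machine.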

Another observation, which follows from the laws of physics and economics, is that data accumulated at the edge behaves as if it has “gravity”. This gravity attracts applications and services whose latency and throughput requirements cannot be supported by a cloud solution, or for which the cost of transmitting the data to the cloud would be prohibitive. In the past, much as in the Curiosity rover story above, system resources have been limited, access to these systems has been restricted because of safety and security concerns, and programming models have not kept up with the state of the art elsewhere. The result was a proliferation of application-specific “boxes”, with system architectures that grew through accretion. In some cases, we’ve seen as many as seventeen of these “boxes” in a control room computer closet.
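A rough back-of-the-envelope calculation shows why data at the edge can feel like it has gravity. The sketch below is an assumption-laden illustration: the sensor count, sample rate, sample size and uplink speed are all hypothetical numbers, not figures from any real installation, and the function names are made up for this example.

```python
SECONDS_PER_DAY = 86_400


def daily_volume_gb(sensors: int, samples_per_s: float, bytes_per_sample: int) -> float:
    """Raw data produced per day, in gigabytes (1 GB = 1e9 bytes)."""
    return sensors * samples_per_s * bytes_per_sample * SECONDS_PER_DAY / 1e9


def transfer_hours(data_gb: float, uplink_mbit_per_s: float) -> float:
    """Hours needed to push data_gb through an uplink of the given speed."""
    bits = data_gb * 8e9
    seconds = bits / (uplink_mbit_per_s * 1e6)
    return seconds / 3600


if __name__ == "__main__":
    # Hypothetical site: 1000 sensors, each sampled 1000 times per second,
    # 8 bytes per sample, sharing a 10 Mbit/s uplink.
    volume = daily_volume_gb(sensors=1000, samples_per_s=1000, bytes_per_sample=8)
    hours = transfer_hours(volume, uplink_mbit_per_s=10)
    print(f"{volume:.1f} GB per day, {hours:.1f} hours to upload")
```

With these made-up numbers the site produces about 691 GB per day and would need roughly 154 hours to upload it, so the data piles up faster than it can leave. Any application that needs all of that data has to move to where the data is.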

Data at the edge is sometimes governed by regulations or policy (e.g. GDPR). These requirements can prevent data from being removed from a facility or, in some cases, prohibit remote access to the system. In these situations, there is even more motivation for keeping the computing close to the source of the data.

Tune in next time

So, there you have a sampling of the things that continue to motivate discussion and innovation in this area. As I mentioned above, this is the first in a series of posts around the topic. In the next post, we’ll begin to build up the requirements and dive into some of the design philosophy we developed at GE as our thinking on Edge Computing matured. Thanks for reading this far, and please feel free to provide feedback to help shape future posts.

About Me

For the majority of my career, I’ve witnessed the evolution of Edge Computing while building safety- and mission-critical embedded systems and applying them in complex “systems of systems.” Currently, as Chief Engineer in the Edge Computing lab at GE Research, I’m on the front lines of the development of industrial software and networking to enable pursuits in the Industrial Internet of Things.

References

- https://www.techradar.com/news/world-of-tech/curiosity-rover-gets-over-the-air-software-update-on-mars-1091943

- http://www.nasa.gov/mission_pages/msl/multimedia/gallery/pia14175.html, Public Domain, https://commons.wikimedia.org/w/index.php?curid=23016064
