GE Reports: What are you hoping to accomplish with these three technologies?

Adeline Digard: We want to simplify and improve the workflow for our customers by automating it as much as possible — to make them more efficient and better able to focus on treating their patients.

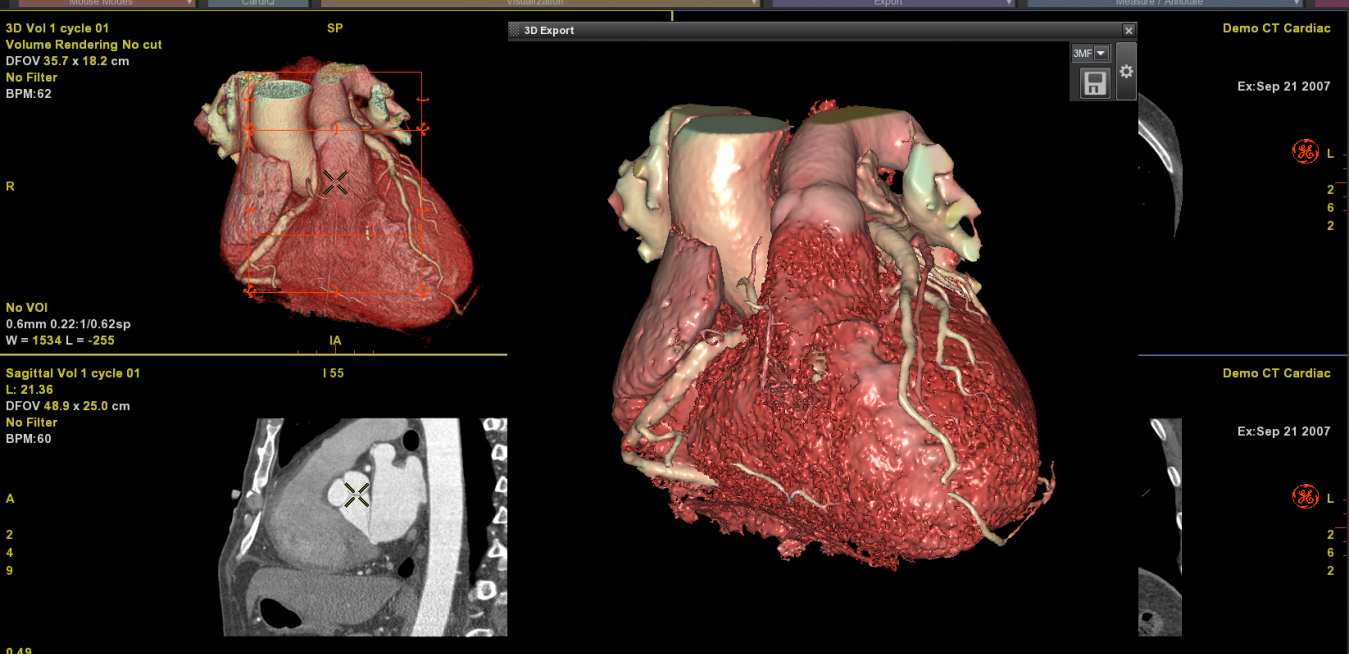

Take 3D printing, for example. What patients and doctors both want is a quick and accurate diagnosis. Doctors also want to educate their patients, explain their conditions and how they will be treated. Here is how it works: A patient comes in and is scanned. The images are then processed by our software to highlight anatomical structures and lesions. We have developed a suite of high-performance analysis tools which automate routine tasks for radiologists, such as automatically detecting vessels and bones, and spotting and measuring lesions, as well as labeling the vertebrae.

The software also allows instant comparison across scans, so doctors can see how lesions spread or shrink in a patient over time. Areas of interest can be color-coded for further exploration or attention. Then the physician can print out a 3D model to examine it firsthand and show it to the patient.
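To make the comparison step concrete, here is a minimal sketch of how the change in a lesion's size between scans might be computed. It is an illustration only: the `LesionMeasurement` type, its field names and the example values in the usage note are hypothetical and are not taken from GE's software.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LesionMeasurement:
    """One measurement of a lesion (hypothetical structure, for illustration)."""
    scan_date: date
    diameter_mm: float


def percent_change(earlier: LesionMeasurement, later: LesionMeasurement) -> float:
    """Relative change in diameter from the earlier scan to the later one, in percent."""
    if earlier.diameter_mm <= 0:
        raise ValueError("earlier diameter must be positive")
    return (later.diameter_mm - earlier.diameter_mm) / earlier.diameter_mm * 100.0


def describe_trend(measurements: list[LesionMeasurement]) -> str:
    """Compare the oldest and newest measurements, whatever order they arrive in."""
    if len(measurements) < 2:
        raise ValueError("need at least two measurements to compare")
    ordered = sorted(measurements, key=lambda m: m.scan_date)
    first, last = ordered[0], ordered[-1]
    change = percent_change(first, last)
    if change > 0:
        direction = "grew"
    elif change < 0:
        direction = "shrank"
    else:
        direction = "was unchanged"
    return (
        f"Lesion {direction} ({change:+.1f}%) "
        f"between {first.scan_date} and {last.scan_date}"
    )
```

With two made-up measurements, 20 mm on an earlier scan and 15 mm on a later one, `describe_trend` would report that the lesion shrank by 25%.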

Above and top image: A patient is scanned. The images are then processed by GE's software to highlight anatomical structures and lesions. It is then printed as a 3D model to show the physician — and patient — so they can see the issues as they grow or shrink over time. Image credits: GE Healthcare.

GER: Why do they care?

AD: This advanced visualization software allows for easy segmentation, so you can pick a very specific piece of anatomy to be transformed into a mesh — a three-dimensional rendering on the computer. Until now, it was very difficult to select a heart, vessel or piece of the spine and then print it. But now you can do just the coronary artery, or two of the four chambers of the heart, or whatever it is the physician needs. Doctors can select what they need and send it to a 3D printer, which prints it right on-site. It offers a way to gain a better understanding of the impact of the disease on the heart, for example.
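The idea of picking one structure and turning it into a surface can be sketched in a few lines. This is a deliberately simplified stand-in, not GE's method: it assumes a hypothetical labeled volume stored as a dictionary from voxel coordinates to structure names, and it builds a blocky surface from exposed voxel faces, whereas production tools typically use more refined surface-extraction techniques.

```python
# Hypothetical representation: each voxel (x, y, z) carries the name of the
# anatomical structure it was segmented into.
Voxel = tuple[int, int, int]

# The six axis-aligned directions in which a voxel can have a neighbor.
NEIGHBOR_OFFSETS = [
    (1, 0, 0), (-1, 0, 0),
    (0, 1, 0), (0, -1, 0),
    (0, 0, 1), (0, 0, -1),
]


def select_structure(labels: dict[Voxel, str], name: str) -> set[Voxel]:
    """Keep only the voxels that belong to the requested structure."""
    return {voxel for voxel, label in labels.items() if label == name}


def exposed_faces(voxels: set[Voxel]) -> list[tuple[Voxel, Voxel]]:
    """List every voxel face that is not shared with another selected voxel.

    Each face is returned as (voxel, outward direction). Together these
    faces form the outer surface of the selected structure.
    """
    faces = []
    for x, y, z in sorted(voxels):
        for dx, dy, dz in NEIGHBOR_OFFSETS:
            if (x + dx, y + dy, z + dz) not in voxels:
                faces.append(((x, y, z), (dx, dy, dz)))
    return faces
```

For example, if two adjacent voxels are labeled as one structure, selecting that structure yields 10 exposed faces: 6 per voxel, minus the 2 faces where the voxels touch. Voxels labeled as anything else are ignored, which is what lets a physician print just the part they need.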

It also helps during discussions with the patient, so that they can be fully informed about their disease and better participate in treatment decisions. It can also help the surgeon visualize various solutions, so they can pick the one that’s best in each individual case. It can even allow them to practice directly on the printed model in cases of complex surgery.

GER: What are you working on now in virtual reality?

AD: We have partnered with two surgeons and provided them with prototypes of our VR system. The idea is to let them play around with this equipment in a clinical situation so they can determine which kind of clinical cases can get the most benefit from VR, and how it can be used to improve the outcomes of their patients.

The first surgeon is focusing on heart disease, specifically the mitral valve, which sits between the left atrium and the left ventricle of the heart. We think VR can be useful here since a failed or failing mitral valve can be replaced, but it’s tricky. Sometimes it’s not easy to see the mitral valve correctly, because of human anatomy. It’s easier to find the mitral valve with VR than by looking at a flat monitor. After surgery, one of the side effects can be the valve leaking or failing, and again, because you can move all around and look from every angle, it’s easier to identify the problem.

The second is a thoracic surgeon who is using the prototype to see how virtual reality would have changed the way he treated his patients. Maybe he could use it to cut back on unpleasant side effects, such as excess skin removal, or reduce time in surgery because he is able to prepare better or work more efficiently. He’s selected some critical cases and, in a double-blind review with another physician, they are looking to see whether the surgical approach would have been different if they had been able to use virtual reality beforehand.

Two surgeons have partnered with GE Healthcare's Advanced Visualization teams to test drive its VR system. The goal is to have better patient outcomes and see how this system can be implemented into each surgery's workflow. Image credit: GE Healthcare.

GER: How about artificial intelligence? What’s happening there?

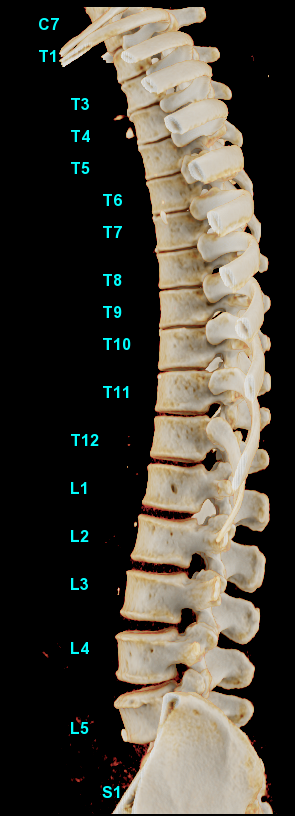

AD: Let’s start with a simple example, one where AI improves the daily workflow and makes doctors more efficient. Say we want to make it easier and faster to identify problems with the vertebrae. To do that, we need to build a robust algorithm that can sift through the image data and automatically display the relevant information for the diagnosis. We start by teaching it what “normal” is, so that eventually it can search for and identify what is abnormal. That means feeding the algorithm the widest possible range of cases to teach it to identify all the vertebrae.

When it can, we bump it up a notch, this time adding a pathology, such as scoliosis. We keep adding more cases to “teach” all kinds of anatomies and conditions until we’ve built this massive database that the algorithm accesses. So when we’re finished, we have an algorithm that’s been trained on an extraordinarily large and complicated database, and 95% of the time, despite however many complexities, it can still visualize the vertebrae correctly from neck to bottom. That’s called deep learning.

With deep learning, this advanced visualization software can use an algorithm on a massive database and 95% of the time, despite however many complexities, it can still visualize the vertebrae correctly from neck to bottom. Image credit: GE Healthcare.

When it’s put to use in a clinical setting (say, helping a radiologist read a CT scan), it could save a great deal of time. The machine has already reviewed every orientation of the spine and run through every permutation, much faster than the physician can, and alerted the physician to any abnormalities it has identified. The AI also automates routine parts of a radiologist’s report. For example, it automatically notes the location and orientation of each image, so the radiologist can skip that vital but tedious part of the report. It can also handle tasks like numbering and describing each vertebra in the lumbar region of a lower back scan.
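The numbering task mentioned above is easy to picture in code once the vertebrae have been detected. The sketch below is an assumption-laden illustration, not GE's algorithm: it supposes a detector has already returned one height value per lumbar vertebra, that larger values are closer to the head, and that exactly five lumbar vertebrae are present. The function names and the report wording are hypothetical.

```python
LUMBAR_LABELS = ["L1", "L2", "L3", "L4", "L5"]


def label_lumbar(centroid_heights_mm: list[float]) -> dict[str, float]:
    """Assign L1 to L5 from top to bottom.

    Assumes exactly five detected lumbar vertebrae and that a larger
    height means closer to the head (both are illustrative assumptions).
    """
    if len(centroid_heights_mm) != len(LUMBAR_LABELS):
        raise ValueError("expected exactly five lumbar vertebrae")
    top_to_bottom = sorted(centroid_heights_mm, reverse=True)
    return dict(zip(LUMBAR_LABELS, top_to_bottom))


def report_lines(labeled: dict[str, float]) -> list[str]:
    """Produce one plain-text report line per labeled vertebra."""
    return [f"{name}: centroid at {height:.1f} mm" for name, height in labeled.items()]
```

Given detections in arbitrary order, `label_lumbar` sorts them so the highest one becomes L1 and the lowest becomes L5, and `report_lines` turns the result into text a radiologist would otherwise type by hand.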

Having the software take care of those universal tasks could save the radiologist an enormous amount of time. That is, in fact, the entire point of AI: to allow physicians to focus their attention on what is critical for their patients rather than spending time on tedious tasks.