They’ve Got Some Nerve

https://youtu.be/PoKcRtDmKJw

What is it? At the University of Michigan, researchers developed a way to tap into “faint, latent signals from arm nerves” in amputees and amplify them “to enable real-time, intuitive, finger-level control of a robotic hand.”

Why does it matter? “This is the biggest advance in motor control for people with amputations in many years,” said Paul Cederna, a professor of plastic surgery and biomedical engineering, and the co-author of a new paper in Science Translational Medicine. “We have developed a technique to provide individual finger control of prosthetic devices using the nerves in a patient’s residual limb. With it, we have been able to provide some of the most advanced prosthetic control that the world has seen.”

How does it work? One challenge for those developing mind-controlled prosthetics is picking up a signal strong enough to guide the artificial limb. As Michigan points out in a news release, some methods go straight to the source, the brain, but that can be invasive and risky. Severed nerves in the limb itself are small and unreliable, and there hasn’t been a good way to “listen” to them. The Michigan researchers found, though, that when they surgically attached “tiny muscle grafts” around the severed nerves, the grafts acted as amplifiers for the nerve signals, which could then be passed on to a prosthetic hand. Machine learning algorithms helped translate the signals so participants in an ongoing clinical trial could control their prosthetic hands.
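For readers curious what "translating the signals" can look like in code, here is a minimal sketch. It is not the Michigan team's algorithm, which the article does not describe. It assumes a simple nearest-centroid classifier, and the channel values, labels and function names are all invented for illustration.

```python
from statistics import mean

# Hypothetical training data: each sample is a short feature vector
# (for example, signal strength on three muscle-graft electrodes),
# labelled with the finger the participant meant to move.
# Every number here is made up for illustration.
TRAINING = {
    "thumb": [[0.9, 0.1, 0.2], [0.8, 0.2, 0.1]],
    "index": [[0.1, 0.9, 0.2], [0.2, 0.8, 0.3]],
    "pinky": [[0.1, 0.2, 0.9], [0.2, 0.1, 0.8]],
}


def centroid(samples):
    """Average the samples channel by channel."""
    return [mean(channel) for channel in zip(*samples)]


def train(training):
    """Return one centroid (average signal pattern) per finger label."""
    return {label: centroid(samples) for label, samples in training.items()}


def decode(model, features):
    """Pick the label whose centroid is closest to the new signal
    (squared Euclidean distance)."""
    def distance(center):
        return sum((a - b) ** 2 for a, b in zip(center, features))

    return min(model, key=lambda label: distance(model[label]))


model = train(TRAINING)
print(decode(model, [0.15, 0.85, 0.25]))  # prints "index"
```

A real decoder would work on streaming signals and far richer features; the sketch only shows the basic idea of matching a new signal pattern to patterns learned from earlier examples.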

Beyond Beans

It looks, smells and tastes like coffee — but is made of something else entirely. Top and above images credit: Getty Images.

What is it? Coffee crops are threatened by climate change, but it may not be time to hit the panic button yet: A Seattle company has developed a way to make coffee “molecule by molecule” — no beans required.

Why does it matter? Digital Trends reports that the two species of beans that constitute most of the world’s coffee — arabica and robusta — are vulnerable to rising global temperatures as well as pests and disease. Demand for coffee, though, continues to rise.

How does it work? According to the folks behind the Seattle startup Atomo, it’s just a matter of figuring out the right blend of chemical compounds: Atomo’s Jarret Stopforth said, “The truth behind it is that there are only a few dozen compounds that actually critically define coffee, without which you wouldn’t know it or perceive it as coffee.” Stopforth, a food scientist, found a way to isolate flavor compounds from other sustainable materials — products that would otherwise go to waste, like watermelon seeds — and combine them just so to get the desired flavor. Atomo has worked out three different varieties of cold-brew: a dark roast, a medium roast, and a smooth roast.

Landmark Findings

The brain's retrosplenial cortex helps us use landmarks in order to navigate. Image credit: Christine Daniloff, MIT.

What is it? If you know that the way to grandma’s house is over the river and through the woods, you’ve got your retrosplenial cortex (RSC) to thank, according to new research from the Massachusetts Institute of Technology. That’s the area of the brain that helps us navigate by landmarks.

Why does it matter? The relationship between the RSC and landmark-based navigation was known previously to neuroscientists, but a new MIT study sheds light on precisely how “neurons in the RSC use both spatial and visual information to encode specific landmarks,” according to a news release. The RSC is an area of focus for neuroscientists studying the brains of Alzheimer’s patients and others with brain damage — who may be able to recognize their surroundings but, if the RSC is damaged, may have trouble navigating them.

How does it work? According to MIT, the RSC creates a “‘landmark code’ by combining visual information about the surrounding environment with spatial feedback” — in their study on mice, that feedback reflected the position of the mice along a track. The mice integrated those two sources of information to learn to locate a reward. Lukas Fischer, the lead author of a study in eLife, said, “We believe that this code that we found, which is really locked to the landmarks, and also gives the animals a way to discriminate between landmarks, contributes to the animals’ ability to use those landmarks to find rewards.”

3D Printing + 1

The Lady Liberty image illustrates the capabilities of polymer brush hypersurface photolithography. Fluorescent polymer brushes were printed from initiators on the surface, and variations in color densities correspond to differences in polymer heights, which can be controlled independently at each pixel in the image. Image and caption credit: Advanced Science Research Center.

What is it? Researchers at Northwestern University and CUNY’s Advanced Science Research Center built a nanoscale 4D printer that’s “capable of constructing patterned surfaces that re-create the complexity of cell surfaces.”

Why does it matter? CUNY’s Adam Braunschweig, the primary investigator on the study, said the technique could lead to a slew of potential applications in medical sciences, including drug development, biosensors and optics: “I am often asked if I’ve used this instrument to print a specific chemical or prepare a particular system. My response is that we’ve created a new tool for performing organic chemistry on surfaces, and its usage and application are only limited by the imagination of the user and their knowledge of organic chemistry.”

How does it work? It’s simple, really, according to CUNY: “The printing method, called Polymer Brush Hypersurface Photolithography, combines microfluidics, organic photochemistry, and advanced nanolithography.” The university says that “the novel system overcomes a number of limitations present in other biomaterial printing techniques, allowing researchers to create 4D objects with precisely structured matter and tailored chemical composition at each voxel” — a 3D pixel. Check out the study in Nature Communications.

Thinking Ahead

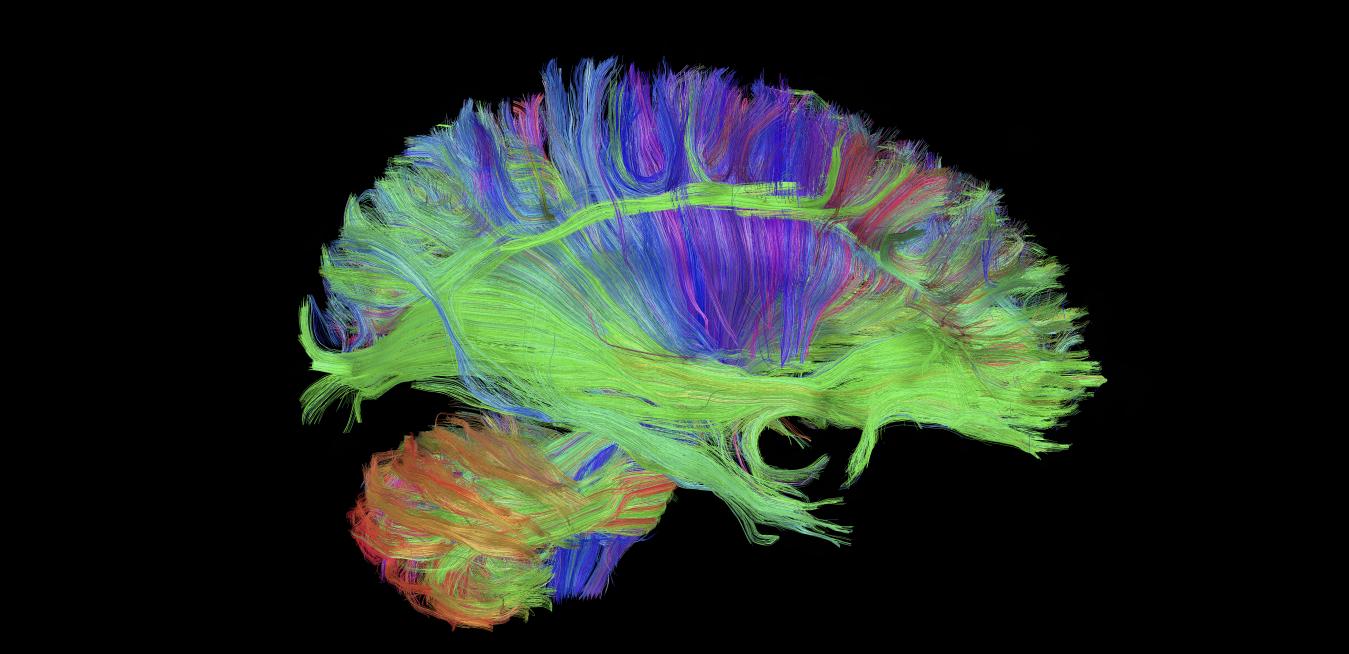

Stanford researchers used brain scans to shed light on the science of going viral on the internet. Image credit: Getty Images. Top image credit: GE Healthcare.

What is it? Researchers scanned people’s brains as they watched videos and then, using the brain scans, were able to predict which videos would go viral online. In other words, your brain can predict virality even if you can’t.

Why does it matter? The study was conducted in the lab of Stanford University neuroscientist Brian Knutson, as part of a broader effort to understand brain activity and decision-making. “In many of our lives, every day, there is often a gap between what we actually do and what we intend to do,” Knutson said. “We want to understand how and why people’s choices lead to unintended consequences — like wasting money or even time — and also whether processes that generate individual choice can tell us something about choices made by large groups of people.”

How does it work? Part of Knutson’s work is “neuroforecasting”: using brain data gathered from individuals to forecast the behavior of larger groups. In this study, published in PNAS, subjects’ brains were imaged with fMRI as they watched videos; researchers also took note of their behavior, such as whether they chose to skip videos, and how long they watched. Then they gathered data on how those videos performed online. It turned out that both behavioral observation and brain scans could predict how long online viewers would stick with a video, but only fMRI scans could forecast the popularity of the videos, as measured in views per day.
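The forecasting step can be pictured with a few lines of code. This is a rough sketch, not the Stanford lab's analysis, which the article does not spell out. It assumes one number per video summarizing lab participants' brain response, fits a straight line relating that number to online views per day, and uses the line to forecast a new video. The data and function names are hypothetical.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def forecast(slope, intercept, x):
    """Predict views per day for a video with brain-response score x."""
    return slope * x + intercept


# Made-up numbers: an average brain-response score per video from the
# lab group, and how each video later performed online (views per day).
brain_scores = [0.1, 0.2, 0.3, 0.4]
views_per_day = [100.0, 200.0, 300.0, 400.0]

slope, intercept = fit_line(brain_scores, views_per_day)
print(round(forecast(slope, intercept, 0.25)))
```

The same fit could be run with a behavioral measure, such as average watch time, in place of the brain score to compare how well each one forecasts what happens online.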