But the truth is such grim assessments are the products of faulty logic and erroneous empirical analysis. For starters, pessimists are wrong to assume robots will eventually be able to do most jobs, or to fear that once a job is lost there will be no second-order job-creating effects from increased productivity and spending. Beyond that, however, the pessimists’ grim assessments suffer from being utterly ahistorical.

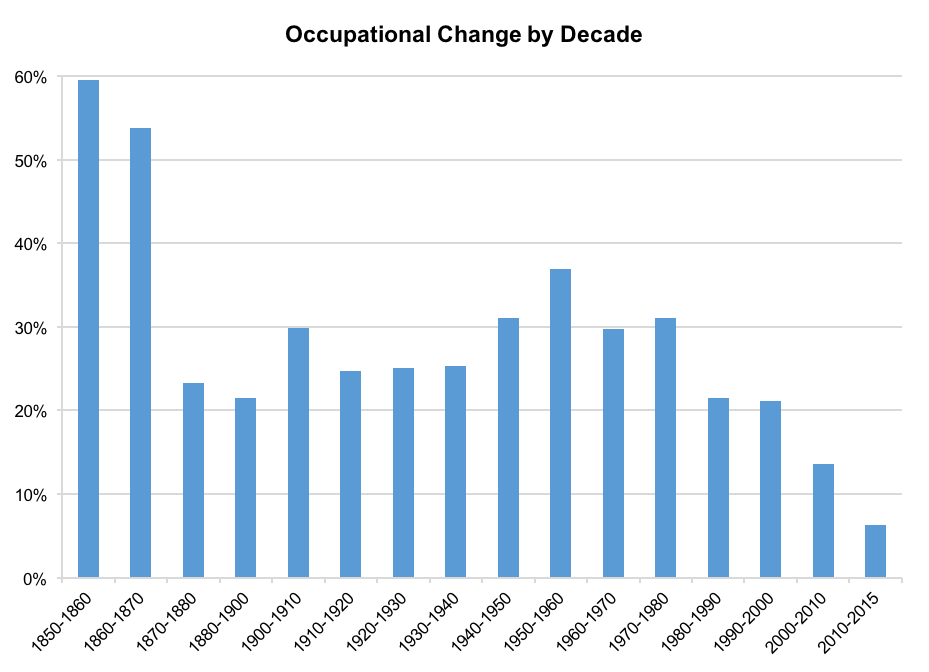

At the Information Technology and Innovation Foundation (ITIF), we have studied 165 years of U.S. employment history, and the surprising reality is that the labor market is not experiencing particularly high levels of job churn, a phenomenon in which some occupations grow while others shrink. In fact, it's the exact opposite: Levels of occupational churn in the U.S. are now at historic lows. The last 15 years encompass the dot-com bust, the financial crisis and subsequent Great Recession, and the ongoing emergence of new technologies purported to be more powerfully disruptive than anything in the past. Yet over that period the level of occupational churn has been just 38 percent of the level in the 50-year period from 1950 to 2000, and 42 percent of the average level stretching all the way back to the decade before the Civil War.

This counterintuitive reality is critically important for policymakers and the public to understand. If opinion leaders continue to fret that just about everyone’s occupation is destined for the scrap heap of history, then the dominant political instinct will be to sour on technological progress. Society will become overly risk averse, seeking tranquility over churn and the status quo over further innovation. This risk is not theoretical: Some jurisdictions ban ride-sharing apps such as Uber because they fear losing taxi jobs. Even Microsoft founder Bill Gates, respected as he is, has proposed taxing robots like human workers—an idea that defies economic logic, akin to taxing tractors so that horse-drawn plows stay competitive.

In fact, the single biggest economic challenge facing advanced economies today is not too much labor-market churn, but too little, and thus too little productivity growth. Increasing productivity is the only way to increase incomes, reduce inequality, and improve living standards. However, productivity in the last decade has advanced at the slowest rate in 60 years.

For historical context, we reviewed U.S. occupational trends from 1850 to 2015, drawing on Census data compiled by the Minnesota Population Center, a demographic research program at the University of Minnesota. We compared changes in occupational job levels from decade to decade. We also assigned codes to each occupation to judge whether increases or decreases in employment in a given decade were likely due to technological progress or other factors. Overall, three main findings emerge from this analysis.

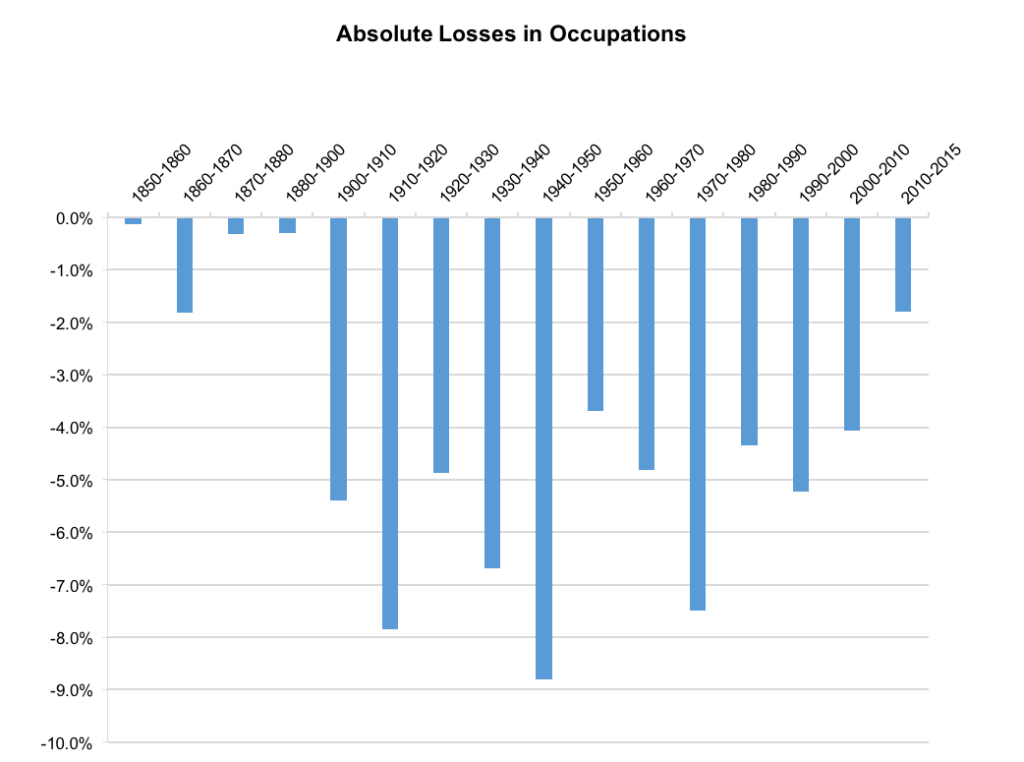

First, the rate of occupational churn in recent decades is at the lowest level in American history—at least as far back as 1850. This is contrary to popular perception. Occupational churn peaked at over 50 percent in the two decades from 1850 to 1870 (meaning the absolute sum of jobs in occupations growing and occupations declining was greater than half of total employment at the beginning of the decade), and it fell to its lowest levels in the last 15 years—to around just 10 percent. When looking only at absolute job losses in occupations, the last 15 years have been comparatively tranquil, with just 70 percent as many losses as in the first half of the 20th century, and a bit more than half as many as in the 1960s, 1970s, and 1990s.
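To make the churn measure concrete, here is a minimal sketch of the calculation as the parenthetical above describes it: add up the absolute change in employment across growing and declining occupations, then divide by total employment at the start of the decade. The function name, the occupation labels, and the employment figures are all hypothetical and chosen only for illustration. The actual ITIF methodology may differ in details not given here, such as how newly created occupations are treated.

```python
def occupational_churn(start, end):
    """Return churn as a share of starting employment.

    start, end: dicts mapping occupation name -> number of jobs at the
    beginning and end of the period. An occupation missing from one
    dict is treated as having zero jobs in that year (an assumption).
    """
    total_start = sum(start.values())
    if total_start <= 0:
        raise ValueError("starting employment must be positive")

    occupations = set(start) | set(end)
    # Sum gains in growing occupations and losses in declining ones,
    # both counted as positive numbers.
    gross_change = sum(
        abs(end.get(occ, 0) - start.get(occ, 0)) for occ in occupations
    )
    return gross_change / total_start


# Hypothetical employment counts (in thousands), not real Census data.
jobs_start = {"farm laborers": 500, "clerks": 300, "machinists": 200}
jobs_end = {"farm laborers": 400, "clerks": 380, "machinists": 230}

churn = occupational_churn(jobs_start, jobs_end)
print(f"Occupational churn: {churn:.0%}")
```

In this made-up example, one occupation sheds 100 thousand jobs while two others add 110 thousand between them, so gross change is 210 thousand against a starting base of 1,000 thousand. Note that net employment growth here is only 10 thousand; churn captures the reshuffling that a net figure hides.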

Second, many believe that if we were to accelerate innovation even more, then new jobs in new industries and occupations would make up for any technology-driven losses. But the truth is that growth in already-existing occupations is what makes up the difference (and then some). In fact, in no decade has technology directly created more jobs than it has eliminated. Yet, throughout most of the period from 1850 to the present, the U.S. economy overall has created jobs at a robust rate, and unemployment has been low. This is because most job creation that is not attributable to population growth has stemmed from productivity-driven increases in purchasing power for consumers and businesses. Innovation allows workers and firms to produce more, so wages go up, prices go down, and spending increases. This, in turn, creates some jobs in new occupations and even more in existing occupations, from cashiers to engineers. There is no reason to believe that this dynamic will change in the future, because consumer wants are far from satisfied.

Third, our findings suggest that technology is not destroying more jobs than ever, contrary to the popular view. The period from 2010 to 2015 saw approximately six technology-related jobs created for every 10 lost to technology, which was the strongest ratio (meaning the lowest share of jobs lost to technology) of any period since the decade from 1950 to 1960.

So, instead of hyperventilating about robots somehow making human labor an anachronism, policymakers, opinion leaders, and the public should take a deep breath and calm down. Predictions that we’re all just one high-tech “unicorn” away from permanent unemployment are vastly overstated, as they always have been.

The real risk for the future is that technological change and resulting productivity growth will be too slow, not too fast. We need to resist the protests of fearful incumbents and craft economic policies that improve people’s living standards by speeding up the rate of disruptive innovation.

Policymakers certainly can and should do more to ease workers’ transitions into new jobs when they lose the ones they have. But that is true regardless of whether the losses stem from technology, trade, or business-cycle downturns. We should equip workers to thrive amid constant occupational churn—and understand that it is a sign of economic progress. Right now, we don’t have enough of it.

This piece first appeared in BRINK.

(Top image: Alex Knight via Unsplash.)

John Wu is an economic research assistant at the Information Technology and Innovation Foundation.

All views expressed are those of the authors.