A collapsed lung can feel a little like being trapped underwater. Pneumothorax, as doctors call it, is caused by tears in the lung that leak air into the space between the lung and the chest wall, creating an air pocket that places immense pressure on the lung from the outside. The more air the patient takes in, the bigger the pocket gets, making it harder and harder to breathe.

Every year, nearly 74,000 Americans suffer from pneumothorax. Fortunately, the condition is easy to treat if it is caught early. Doctors can quickly relieve the pressure by inserting a needle or tube into the air pocket.

But doctors can’t treat pneumothorax if they’re not certain it’s what is causing the breathing problems. The feeling of being unable to breathe could instead be the result of pneumonia, a heart problem or even a panic attack, conditions that doctors treat in very different ways. So doctors routinely order X-rays for patients they suspect have a collapsed lung. Unfortunately, in most hospitals it can take two to eight hours for a radiologist to read an X-ray, and as a pneumothorax grows, it becomes increasingly life-threatening. “Currently, 62% of scans are marked ‘STAT’ or for urgent reading, but they aren’t all critical,” says Jie Xue, president and CEO of GE Healthcare’s X-ray division. “This creates a delay in turnaround for truly critical patients, which can be a serious issue.”

For Dr. Rachael Callcut, a surgeon at University of California San Francisco's Zuckerberg San Francisco General Hospital and Trauma Center and director of data science for the Center for Digital Health Innovation, all this uncertainty made pneumothorax the perfect test case for artificial intelligence (AI) that can read X-rays. “The timing of the diagnosis is important,” Callcut says. “The longer we go without knowing, the higher the risk to the patient. An AI early warning detection system allows us to deliver care sooner.”

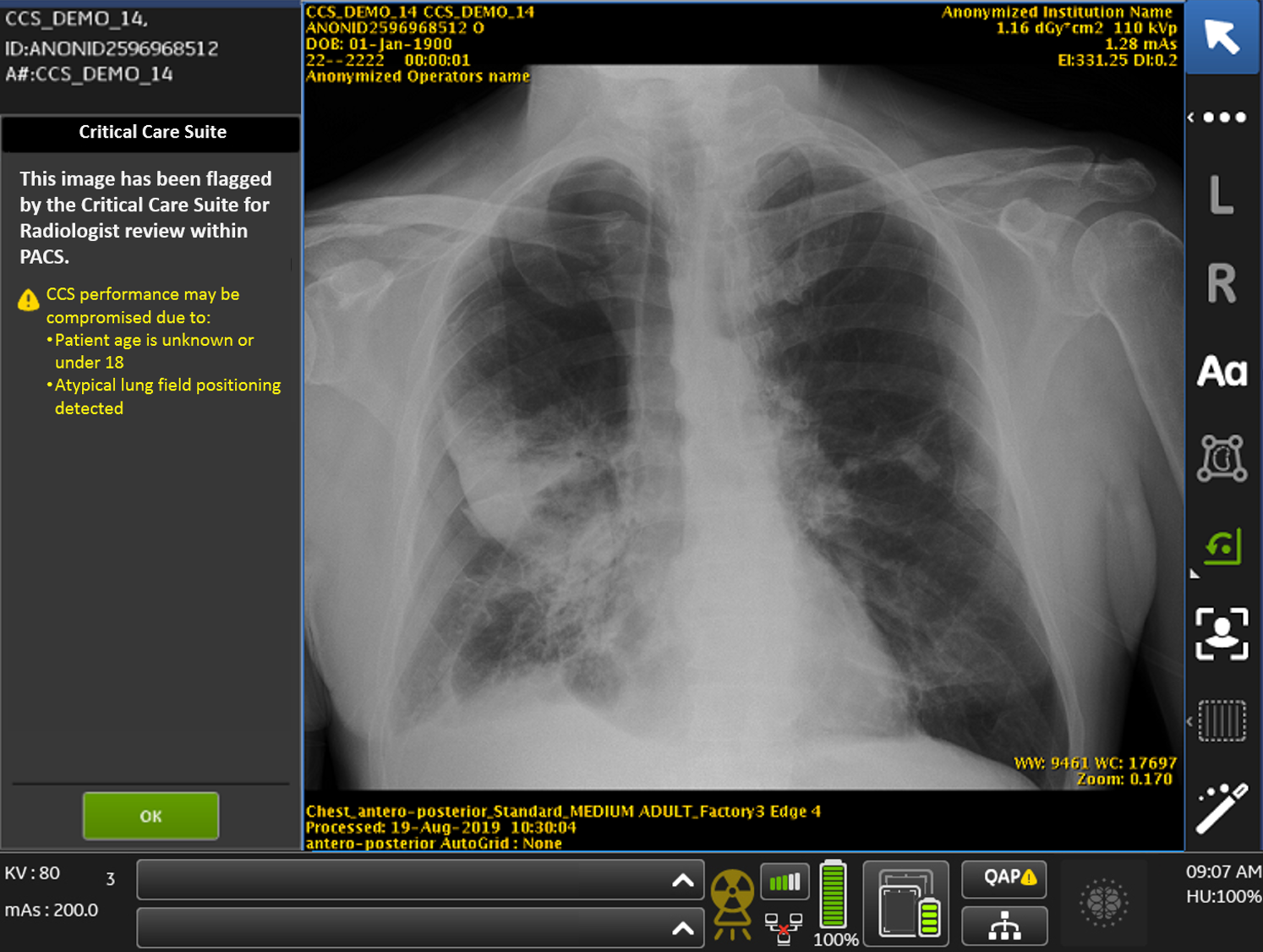

Working with GE Healthcare, Callcut helped develop the Critical Care Suite, a collection of algorithms embedded in a mobile X-ray device. Last week, the FDA cleared the AI for use. This means doctors can begin working with the software in pilot tests. Critical Care Suite should start to show up more widely in hospitals early next year. “From an engineering point of view, this is really exciting,” says Katelyn Nye, general manager of mobile radiology and AI at GE Healthcare. “We just passed a significant milestone which will begin to help technologists and radiologists do their jobs more efficiently.”

Top and above images: The Critical Care Suite will be available on GE Healthcare’s Optima XR240amx mobile X-ray machine, which means technologists can take an X-ray using artificial intelligence at a patient’s bedside. “X-ray — the world’s oldest form of medical imaging — just got a whole lot smarter, and soon, the rest of our offerings will too,” says Kieran Murphy, president and CEO of GE Healthcare. Top and above images credits: GE Healthcare

While radiologists can carefully examine one X-ray image at a time, AI can help sort through hundreds of images in minutes and call attention to anything that looks suspicious. In the case of the Critical Care Suite, the team trained the algorithm by feeding it thousands of anonymized X-ray images of healthy lungs and lungs afflicted with pneumothorax and taught the software to identify signs of the condition. The process is similar to teaching a child to read. Point to the word “cat” and say “cat” enough times and a child will learn the word.
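
The “point at it and name it” approach is what machine-learning practitioners call supervised learning. As a rough sketch only, the Python below trains a logistic-regression classifier by gradient descent on a handful of labeled examples. The two numeric features per image and all of their values are hypothetical stand-ins for what a real model would extract from pixels. The article does not describe GE’s model, and image classifiers of this kind are generally much larger models trained directly on the images.

```python
import math

def sigmoid(z: float) -> float:
    """Squash a raw score into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(examples, labels, lr=0.5, epochs=2000):
    """Fit weights w and bias b by batch gradient descent on log-loss."""
    n_features = len(examples[0])
    w = [0.0] * n_features
    b = 0.0
    n = len(examples)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in zip(examples, labels):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of log-loss with respect to the raw score
            for j in range(n_features):
                grad_w[j] += err * x[j]
            grad_b += err
        # Step against the average gradient to reduce prediction error.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b

def predict_proba(w, b, x):
    """Model's estimated probability that example x shows the condition."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

# Hypothetical, made-up feature vectors; label 1 = pneumothorax, 0 = healthy.
examples = [[0.9, 0.8], [0.8, 0.9], [0.7, 0.6],
            [0.1, 0.2], [0.2, 0.1], [0.3, 0.2]]
labels = [1, 1, 1, 0, 0, 0]

w, b = train_logistic(examples, labels)
print(predict_proba(w, b, [0.85, 0.85]))  # high probability
print(predict_proba(w, b, [0.15, 0.15]))  # low probability
```

Each pass nudges the weights in whatever direction reduces error on the labeled examples, which is the same basic loop as repeating “cat” many times, at a vastly smaller scale.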

The images used to train the algorithm were pulled from hospitals in San Francisco; Toronto; Bethlehem, Pennsylvania; and New Delhi to provide a diverse selection of X-ray scans. In India, for example, X-rays tend to be taken from farther away, so the patient’s arms are often visible in the scan. The algorithm learned to ignore these extra details and focus on the lungs.

To ensure that the algorithms meet FDA standards, GE conducted a clinical trial in which two board-certified radiologists reviewed 800 scans and marked whether each X-ray indicated pneumothorax and, if so, whether it was large or small. GE then ran the same scans through the Critical Care Suite. The trial showed that the algorithm can detect a large pneumothorax with an AUC (area under the receiver operating characteristic, or ROC, curve) of 0.99 and a small pneumothorax with an AUC of 0.94. AUC measures how well a model separates positive cases from negative ones: 1.0 means perfect separation, while 0.5 is no better than chance.
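
To make the AUC figures more concrete: AUC equals the probability that the model gives a randomly chosen positive case (here, a scan the radiologists marked as pneumothorax) a higher score than a randomly chosen negative case, with ties counting as half. The sketch below computes AUC directly from that definition. The scores and labels are invented for illustration and are not data from GE’s trial.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    labels: 1 for a confirmed positive (e.g. radiologist-marked pneumothorax),
            0 for a negative. Ties in score count as half a correct ranking.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical model scores for six scans and their ground-truth labels.
scores = [0.95, 0.80, 0.40, 0.30, 0.20, 0.60]
labels = [1, 1, 1, 0, 0, 0]
print(roc_auc(scores, labels))  # 8 of 9 pairs ranked correctly: 0.888...
```

Comparing every pair is fine for a few hundred scans; scikit-learn’s `roc_auc_score` computes the same quantity more efficiently for larger data sets.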

The Critical Care Suite will be available on GE Healthcare’s Optima XR240amx mobile X-ray machine, which means technologists can take an X-ray using AI at a patient’s bedside. The machine can process the image in seconds and quickly alert doctors if the scan looks suspicious. Radiologists can then closely examine the scan and make a diagnosis.
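
The value of a bedside alert is that suspicious scans reach a radiologist first, instead of waiting in a queue where, as Xue notes, most scans are already marked STAT. A minimal sketch of such a prioritized reading list, built on Python’s `heapq` module, follows. The `Worklist` class, the 0.5 alert threshold and the scan IDs are all hypothetical; the article does not describe how GE’s alerts connect to hospital worklists.

```python
import heapq
import itertools

class Worklist:
    """Hypothetical reading queue in which AI-flagged scans are read first."""

    def __init__(self, alert_threshold: float = 0.5):
        self.alert_threshold = alert_threshold  # hypothetical cut-off score
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker keeps arrival order

    def add(self, scan_id: str, ai_score: float) -> None:
        flagged = ai_score >= self.alert_threshold
        priority = 0 if flagged else 1  # lower number = read sooner
        heapq.heappush(self._heap, (priority, next(self._arrival), scan_id))

    def next_scan(self) -> str:
        _, _, scan_id = heapq.heappop(self._heap)
        return scan_id

    def __len__(self) -> int:
        return len(self._heap)

wl = Worklist()
wl.add("bed-12", 0.10)
wl.add("bed-07", 0.05)
wl.add("bed-31", 0.92)  # flagged: jumps ahead of earlier arrivals
order = [wl.next_scan() for _ in range(len(wl))]
print(order)  # ['bed-31', 'bed-12', 'bed-07']
```

Using the arrival counter as a tie-breaker means unflagged scans are still read in the order they came in.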

Critical Care Suite also comes with three additional algorithms to reduce the amount of time technologists have to spend doing quality control on an image before sending it to the radiologist.

One algorithm automatically rotates a scan into the correct position. Another ensures that the technologist has used the correct protocols (for chest instead of abdomen, for example). The third checks that no part of the lung area has been cut off in the X-ray. “We want to catch these errors at the bedside so the technologist can take another image, or reprocess the image, right away if necessary,” Nye says.
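
Two of these quality-control checks lend themselves to a simple illustration. The sketch below assumes that some upstream model has already estimated how many quarter turns an image needs and produced a binary lung mask (1 = lung pixel). Both assumptions, and the function names `rotate90` and `lung_field_clipped`, are hypothetical and do not describe GE’s implementation. The code rotates an image by quarter turns and flags a possible cut-off whenever a lung pixel touches the image border; the protocol check is not shown.

```python
from typing import List

Grid = List[List[int]]

def rotate90(grid: Grid, quarter_turns: int) -> Grid:
    """Rotate a 2-D image clockwise by quarter_turns * 90 degrees."""
    for _ in range(quarter_turns % 4):
        # Clockwise rotation: reverse the row order, then transpose.
        grid = [list(row) for row in zip(*grid[::-1])]
    return grid

def lung_field_clipped(mask: Grid) -> bool:
    """True if any lung pixel (value 1) lies on the image border, a simple
    hint that part of the lung may fall outside the field of view."""
    rows, cols = len(mask), len(mask[0])
    border = (
        mask[0] + mask[-1]
        + [mask[r][0] for r in range(rows)]
        + [mask[r][cols - 1] for r in range(rows)]
    )
    return any(border)

# Hypothetical 4x4 masks: lungs fully inside the frame vs. touching the top edge.
inside = [[0, 0, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
clipped = [[0, 1, 0, 0], [0, 1, 1, 0], [0, 1, 1, 0], [0, 0, 0, 0]]
print(lung_field_clipped(inside), lung_field_clipped(clipped))  # False True
```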

Eventually, the Critical Care Suite will encompass more algorithms that will look for other lung issues. The faster doctors can diagnose lung problems, the more quickly they can save lives.

Additional partners in the development of Critical Care Suite include Pennsylvania’s St. Luke’s University Health Network, Toronto’s Humber River Hospital and Mahajan Imaging’s Centre for Advanced Research in Imaging, Neuroscience and Genomics in India.