Star Child

The Netherlands-based company SpaceLife Origin has set a target date of 2024 to send a pregnant woman, along with a “trained, world-class medical team,” to give birth in space. Image credit: Getty Images.

What is it? You’ve heard of water birth, where babies are delivered in a tub of warm water? Get ready for the 21st-century analog: space birth, where babies are delivered 250 miles above the earth’s surface.

Why does it matter? The Netherlands-based company SpaceLife Origin has set a target date of 2024 to send a pregnant woman, along with a “trained, world-class medical team,” to give birth in space. The plan is for her to be up there for 24 to 36 hours, during which time she will deliver a baby, then return via capsule to life below. According to an article in The Atlantic by Marina Koren, the company believes that “spacefaring childbirth is part of creating an insurance policy for the human species,” in case we someday wreck the planet so badly that we have to find a new home elsewhere. The company thinks its services will appeal particularly to wealthy preppers.

How does it work? It is not entirely clear, and Koren mostly explores the hazards of orbital childbirth, including regulatory roadblocks (who issues the birth certificate?), ethical considerations and possible medical complications. In microgravity, pregnant people couldn’t walk off the pain of labor, epidurals would be hard to administer, bodily fluids would float everywhere, and re-entry into the atmosphere is tough on the hardiest astronaut — to say nothing of a newborn baby and exhausted parent. And if something were to go seriously wrong? The nearest emergency room is far away.

These Heart Cells Don’t Miss A Beat

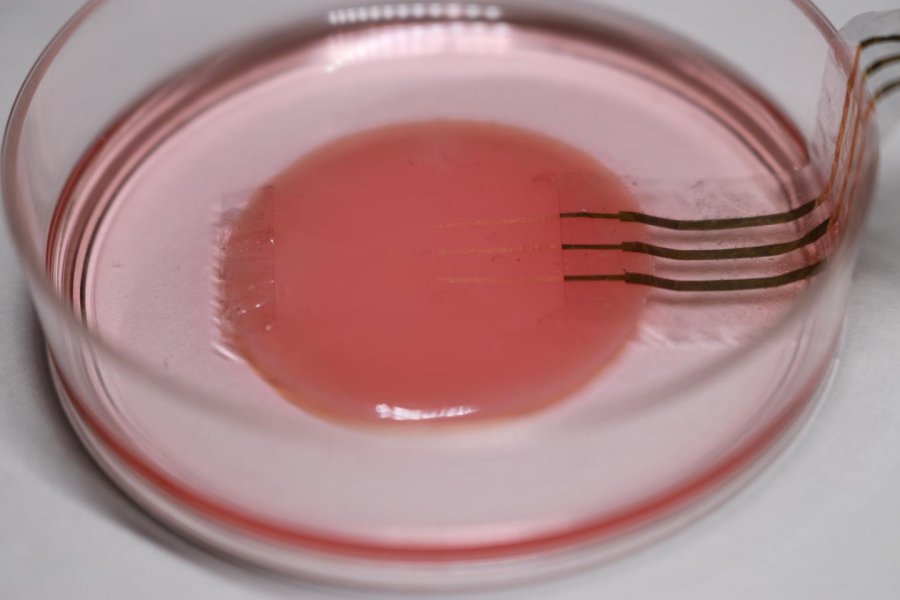

The layer of cardiomyocytes is only a few tens of micrometers thick and contracts with a force of just a few millinewtons. Caption and image credits: Someya Group.

What is it? In Japan, a collaboration between the University of Tokyo, Tokyo Women’s Medical University and the research institute RIKEN has yielded a tiny ultrasoft electronic sensor that can monitor the function of heart cells as they beat without affecting their behavior.

Why does it matter? Scientists are generally only able to study heart muscle cells, or cardiomyocytes, with rigid probes on hard petri dishes — which can affect the way they beat. This development could help scientists better understand how the cells actually function in the body and pave the way for more advanced study of other cells and organs. The delicate technology might also be used for embedded medical devices.

How does it work? Sunghoon Lee, the researcher who came up with the idea, said the sensor is made via a process called electrospinning, similar to 3D printing, which extrudes polyurethane strands into a thin sheet. After applying gold to enhance conductivity, Lee applies the tiny sensor to the cardiomyocytes very carefully. “The polyurethane strands which underlie the entire mesh sensor are 10 times thinner than a human hair. It took a lot of practice and pushed my patience to its limit, but eventually I made some working prototypes,” Lee said. “Whether it’s for drug research, heart monitors or to reduce animal testing, I can’t wait to see this device produced and used in the field. I still get a powerful feeling when I see the close-up images of those golden threads.”

AI Sorts Out Mice And Human Cancer

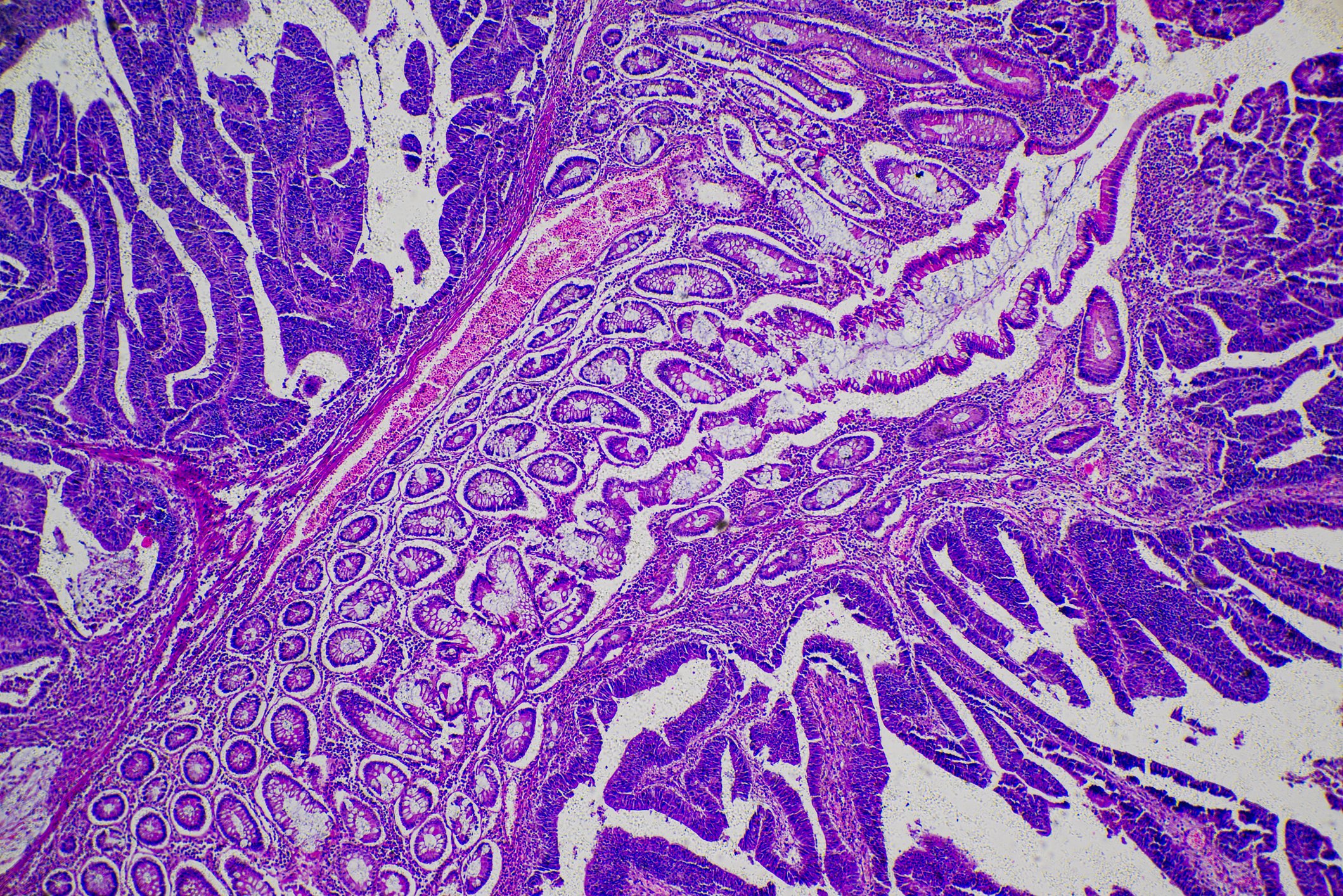

A micrograph of human tumor tissue. Image credit: Getty Images.

What is it? Inspired by the human visual system, convolutional neural networks are a form of artificial intelligence that’s particularly adept at analyzing imagery. As they explain in Cancer Research, scientists at Japan’s Osaka University have found a way to use such AI to distinguish between different types of cancer cells.

Why does it matter? The specific types of cancer cell present can guide physicians toward the best treatment, but these cell types vary widely between people, even within the same disease, according to Osaka University. For doctors, differentiating cell types is difficult and time-consuming. For AI, it’s a relative breeze. Lead author Masayasu Toratani said, “The automation and high accuracy with which this system can identify cells should be very useful for determining exactly which cells are present in a tumor or circulating in the body of cancer patients. For example, knowing whether or not radioresistant cells are present is vital when deciding whether radiotherapy would be effective.”

How does it work? The network learned by analyzing piles of microscopic images of cancer cells from humans and mice. “We first trained our system on 8,000 images of cells obtained from a phase-contrast microscope,” said corresponding author Hideshi Ishii. “We then tested its accuracy on another 2,000 images, to see whether it had learned the features that distinguish mouse cancer cells from human ones, and radioresistant cancer cells from radiosensitive ones.” According to the university, the scientists hope this could lead to a universal system that can “automatically identify and distinguish all such cells.”
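The train-then-test routine Ishii describes can be sketched in a few lines of Python. This is only an illustration of holding back 2,000 of 10,000 images to check accuracy, not the Osaka team's code: the image names, the `split_dataset` and `accuracy` helpers, and the random shuffle are all assumptions made for the example.

```python
import random


def split_dataset(items, train_size, seed=0):
    """Shuffle a copy of the items and split it into (train, test) lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    return shuffled[:train_size], shuffled[train_size:]


def accuracy(predictions, labels):
    """Return the fraction of predictions that match the true labels."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must be the same length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


# Hypothetical stand-ins for 10,000 phase-contrast images.
image_ids = [f"img_{i:05d}" for i in range(10_000)]

# 8,000 images to learn from, 2,000 the network never sees during training.
train_ids, test_ids = split_dataset(image_ids, train_size=8_000)
print(len(train_ids), len(test_ids))
```

Keeping the test images separate is the point of the exercise: a score measured on images the network has already seen would say little about how it handles new cells.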

O, Say Can You See, Through Transparent 3D-Printed Materials?

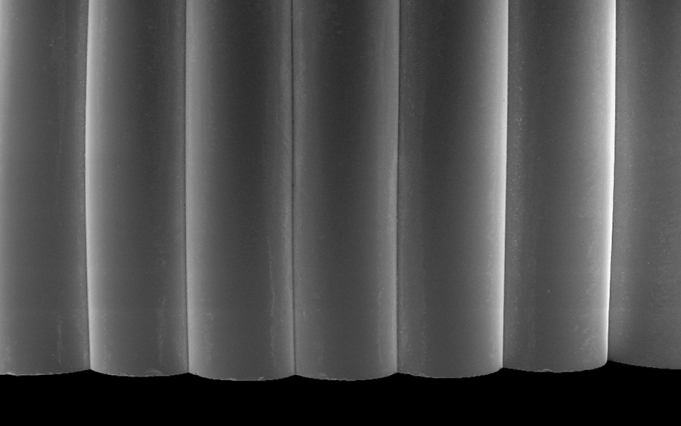

Top image: The glass 3-D printing process. Image credit: Steven Keating/MIT News. Above: Scanning electron microscope image of a sample from a printed glass prism. Image credit: James Weaver/MIT News.

What is it? Scientists from MIT’s Mediated Matter Group have developed a method for the 3D-printing of glass at “architectural scale,” paving the way for its incorporation as a better building material.

Why does it matter? As the team explains in 3D Printing and Additive Manufacturing, glass came into widespread use in windows and other household objects due to manufacturing advances during the Industrial Revolution — but because the technology hasn’t much advanced since then, “processes for the fabrication of complex geometry and custom objects with glass remain elusive.” In short, we haven’t been able to take full advantage of glass’ unique properties as a building material, including its light refraction. They write, “Combining the advantages of this [additive manufacturing] technology with the multitude of unique material properties of glass such as transparency, strength, and chemical stability, we may start to see new archetypes of multifunctional building blocks.”

How does it work? The team fabricated a set of 3-meter-tall glass columns for Milan Design Week 2017, and has returned with a full technical explanation of how it achieved this: via a “three-zone thermal control system,” including one heated box that holds the melted glass, and another container in which the object is printed.

This Computer Can Speak Your Mind

A collaboration between neuroscientists at Germany’s University of Bremen and the Netherlands’ Maastricht University asked patients during brain surgery to read certain words out loud, while matching language output with brain activity. Image credit: Getty Images.

What is it? Science magazine reports on three teams making strides in reading human brain activity, running it through artificial intelligence programs, and turning it into computer-generated speech.

Why does it matter? For folks who have lost the ability to speak due to stroke or disease, current options are limited. Like Stephen Hawking, they can use their eyes or make small facial motions to control a computer that creates speech on their behalf. But, according to Science, researchers are getting closer to technology that could translate brain activity more directly into speech, giving users better control over their tone or letting them jump spontaneously into a lively conversation.

How does it work? Very slowly and carefully. Researchers need direct access to the brain to gather the kind of data they need, and since they can’t go around asking folks willy-nilly if they can drill into their skulls, they’re left to collect info when the opportunity arises — such as on patients undergoing surgery for brain tumors or epilepsy. The three teams have been using different methods to get at their common goal. A collaboration between neuroscientists at Germany’s University of Bremen and the Netherlands’ Maastricht University, for instance, asked patients during brain surgery to read certain words out loud, while matching language output with brain activity. The team fed the data into a neural network and then asked it to generate new words based on readouts of different brain activity. About 40 percent of the time, the AI was able to generate understandable language.