Nuclear power plants can produce huge amounts of electricity with a relatively small carbon footprint compared with power stations that burn fossil fuels. But building, maintaining and operating a nuclear plant is also relatively expensive. To make sure these plants remain competitive, the U.S. Department of Energy’s Advanced Research Projects Agency-Energy (ARPA-E) has scientists at GE Research helping to find a way to reduce operations and maintenance costs at such facilities to a mere $2 per megawatt-hour when engineers start designing the next generation of advanced reactors. That’s a tall order, given that operators can spend more than 10 times that amount to keep their nuclear plants up and running.

The DOE is betting that, by automating some tasks with artificial intelligence, nuclear facilities could reduce their operating expenses in the future. Last month, ARPA-E awarded grants to a group that includes GE Research, Massachusetts Institute of Technology, Argonne National Laboratory, the University of Michigan and others to conduct a three-year study on how AI and computer simulations can help monitor and maintain nuclear reactors — part of a program dubbed Generating Electricity Managed by Intelligent Nuclear Assets, or GEMINA.

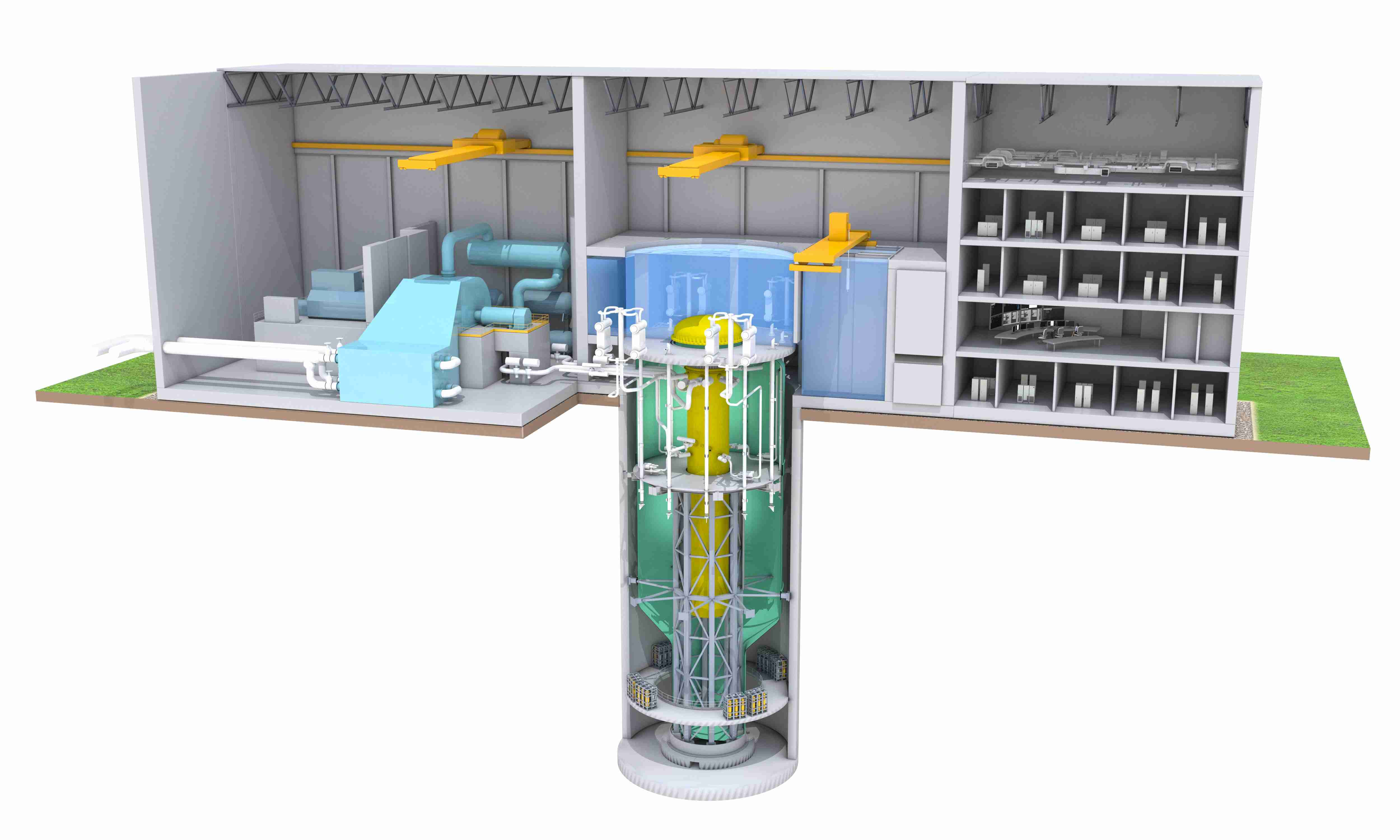

For the project, the GE Research team will use a highly detailed virtual model of a new GE Hitachi reactor. Such “digital twins,” as these models are called, gather data from many sensors located inside the plant and allow engineers to create a virtual representation of a part, machine or process. GE is already using the technology to monitor jet engines, gas turbines and many other assets and systems.

The GE Research team will use its digital twin to simulate scenarios that nuclear facilities experience in real life to determine what needs to be monitored, where sensors should be placed and how best to detect wear and other potential problems in the system, says Abhinav Saxena, a senior AI scientist at GE Research and digital twin expert.

The sensors themselves add another layer of complexity; they can also degrade or require recalibration, and the GE Research team will study how they might be monitored and maintained remotely.
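One simple way to picture remote sensor monitoring is to compare redundant sensors that measure the same quantity and flag any that keep straying from the group. The sketch below is purely illustrative and is not the GE Research method, which the article does not describe; the function name `flag_drifting_sensors`, the `tolerance` and `min_fraction` parameters, and the sample readings are all assumptions made for this example.

```python
from statistics import median
from typing import Dict, List


def flag_drifting_sensors(
    history: Dict[str, List[float]],
    tolerance: float,
    min_fraction: float = 0.5,
) -> List[str]:
    """Return sensors that deviate from the group median too often.

    history maps a (hypothetical) sensor name to readings taken at the
    same timestamps. A sensor is flagged when its reading differs from
    the median of all sensors by more than `tolerance` in at least
    `min_fraction` of the samples.
    """
    if not history:
        return []
    names = list(history)
    n_samples = len(history[names[0]])
    if n_samples == 0:
        return []

    outlier_counts = {name: 0 for name in names}
    for t in range(n_samples):
        # The median of redundant sensors serves as the consensus value.
        consensus = median(history[name][t] for name in names)
        for name in names:
            if abs(history[name][t] - consensus) > tolerance:
                outlier_counts[name] += 1

    return [
        name for name in names
        if outlier_counts[name] / n_samples >= min_fraction
    ]


# Illustrative, made-up temperature readings from three redundant sensors.
readings = {
    "a": [100.0, 100.1, 100.2, 100.1],
    "b": [100.1, 100.0, 100.1, 100.2],
    "c": [100.0, 101.5, 102.0, 102.5],  # slowly drifting upward
}
suspects = flag_drifting_sensors(readings, tolerance=1.0)
```

A flagged sensor would then be a candidate for recalibration or inspection. A real plant would need far more than a median check, for example handling correlated failures and sensors with no redundant partner.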

Correctly analyzing all of the incoming data is paramount, says Saxena, given the nature of the energy source. That’s why the GE Research team is planning to use its “humble AI” software. Humble AI is a machine learning program GE is developing to monitor incoming information, draw conclusions based on that information and notice when certain data are missing. The AI package will then ask for more data or for human input, while also predicting the accuracy of the conclusions it has drawn. In essence, it tells engineers when the AI might be wrong, and how likely that is. Information about the accuracy of readouts coming from sensors adds another level of safety. Ultimately, by further developing this software package, GE hopes to provide predictive maintenance capabilities that determine whether a component needs to be fixed right away or whether the repair can be deferred until scheduled maintenance, thereby optimizing the cost and safety of maintenance operations.
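The behavior described here (notice missing data, estimate confidence, defer to a human when unsure) can be illustrated with a toy example. This is a minimal sketch under stated assumptions, not GE's humble AI software: the `assess` function, the `Assessment` type, the input names, the use of disagreement among several models as a confidence proxy, and the 0.8 threshold are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean, pstdev
from typing import Callable, Dict, List, Optional, Sequence

Reading = Dict[str, Optional[float]]
Model = Callable[[Reading], float]


@dataclass
class Assessment:
    action: str                      # "act", "ask_human" or "request_data"
    estimate: Optional[float] = None
    confidence: Optional[float] = None
    missing: List[str] = field(default_factory=list)


def assess(
    reading: Reading,
    models: Sequence[Model],
    required: Sequence[str],
    min_confidence: float = 0.8,
) -> Assessment:
    """Toy 'humble' decision rule (an assumption, not GE's algorithm)."""
    # Step 1: notice missing inputs and ask for them instead of guessing.
    missing = [name for name in required if reading.get(name) is None]
    if missing:
        return Assessment(action="request_data", missing=missing)

    # Step 2: let several models estimate the same quantity.
    estimates = [model(reading) for model in models]

    # Step 3: turn model disagreement into a rough confidence in (0, 1].
    confidence = 1.0 / (1.0 + pstdev(estimates))

    # Step 4: act only when confident; otherwise defer to a human.
    action = "act" if confidence >= min_confidence else "ask_human"
    return Assessment(action, mean(estimates), confidence)


# Hypothetical wear estimators built on made-up sensor inputs.
models = [
    lambda r: 0.01 * r["temp_c"],
    lambda r: 0.01 * r["temp_c"] + 0.02,
    lambda r: 0.5 * r["vibration_mm_s"],
]
required = ["temp_c", "vibration_mm_s"]

agree = assess({"temp_c": 300.0, "vibration_mm_s": 6.0}, models, required)
disagree = assess({"temp_c": 300.0, "vibration_mm_s": 12.0}, models, required)
incomplete = assess({"temp_c": 300.0, "vibration_mm_s": None}, models, required)
```

In this sketch, the first reading yields close agreement among the models and an `"act"` decision, the second produces disagreement and an `"ask_human"` decision, and the third is missing an input and triggers `"request_data"`. Production systems would typically rely on calibrated uncertainty estimates rather than a simple spread across models.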

Slated to begin by September, the study will test these and other technologies. Saxena says that discoveries made along the way will undoubtedly lead to cost savings for power plants in the future, and that’s really what ARPA-E is going for. “This is a very futuristic, forward-looking program,” he says.